Philosophy with Vector Embeddings, OpenAI and Cassandra / Astra DB

In this quickstart you will learn how to build a "philosophy quote finder & generator" using OpenAI's vector embeddings and [Apache Cassandra®](https://cassandra.apache.org), or equivalently DataStax [Astra DB through CQL](https://docs.datastax.com/en/astra-serverless/docs/vector-search/quickstart.html), as the vector store for data persistence.

Get this prompt chain

Philosophy with Vector Embeddings, OpenAI and Cassandra / Astra DB

CQL Version

In this quickstart you will learn how to build a "philosophy quote finder & generator" using OpenAI's vector embeddings and Apache Cassandra®, or equivalently DataStax Astra DB through CQL, as the vector store for data persistence.

The basic workflow of this notebook is outlined below. You will evaluate and store the vector embeddings for a number of quotes by famous philosophers, use them to build a powerful search engine and, after that, even a generator of new quotes!

The notebook exemplifies some of the standard usage patterns of vector search -- while showing how easy is it to get started with the vector capabilities of Cassandra / Astra DB through CQL.

For a background on using vector search and text embeddings to build a question-answering system, please check out this excellent hands-on notebook: Question answering using embeddings.

Choose-your-framework

Please note that this notebook uses the Cassandra drivers and runs CQL (Cassandra Query Language) statements directly, but we cover other choices of technology to accomplish the same task. Check out this folder's README for other options. This notebook can run either as a Colab notebook or as a regular Jupyter notebook.

Table of contents:

- Setup

- Get DB connection

- Connect to OpenAI

- Load quotes into the Vector Store

- Use case 1: quote search engine

- Use case 2: quote generator

- (Optional) exploit partitioning in the Vector Store

How it works

Indexing

Each quote is made into an embedding vector with OpenAI's Embedding. These are saved in the Vector Store for later use in searching. Some metadata, including the author's name and a few other pre-computed tags, are stored alongside, to allow for search customization.

Search

To find a quote similar to the provided search quote, the latter is made into an embedding vector on the fly, and this vector is used to query the store for similar vectors ... i.e. similar quotes that were previously indexed. The search can optionally be constrained by additional metadata ("find me quotes by Spinoza similar to this one ...").

The key point here is that "quotes similar in content" translates, in vector space, to vectors that are metrically close to each other: thus, vector similarity search effectively implements semantic similarity. This is the key reason vector embeddings are so powerful.

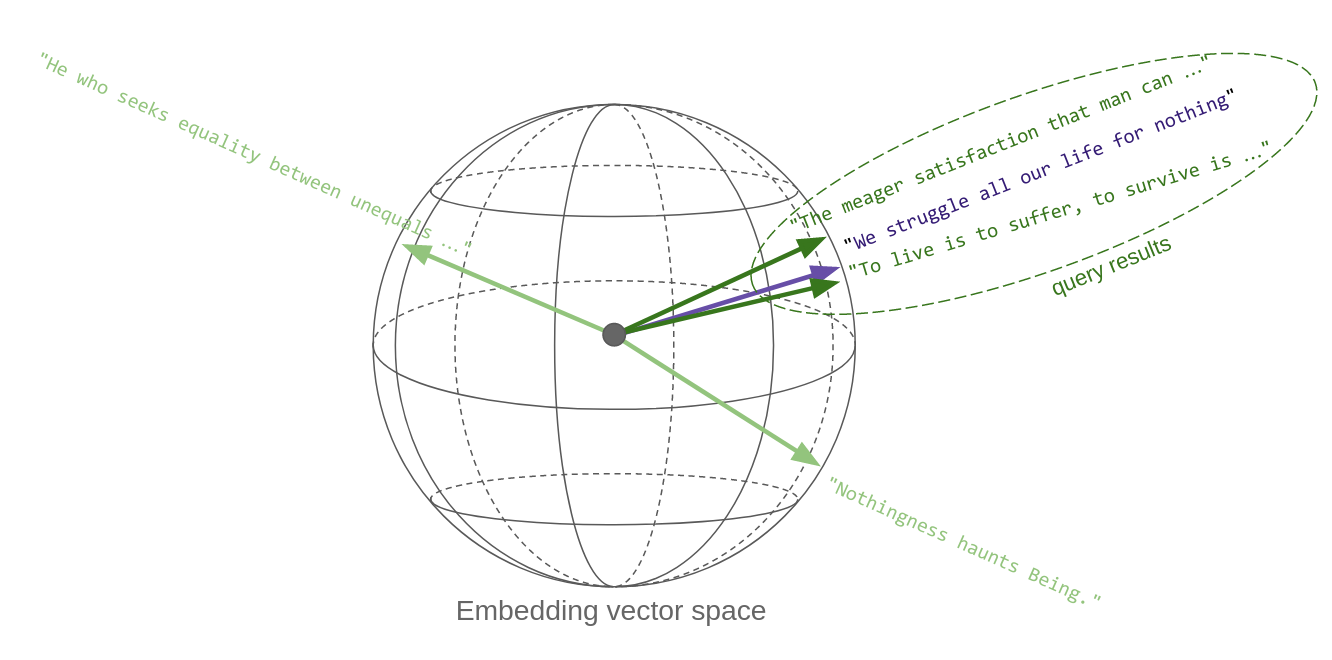

The sketch below tries to convey this idea. Each quote, once it's made into a vector, is a point in space. Well, in this case it's on a sphere, since OpenAI's embedding vectors, as most others, are normalized to unit length. Oh, and the sphere is actually not three-dimensional, rather 1536-dimensional!

So, in essence, a similarity search in vector space returns the vectors that are closest to the query vector:

Generation

Given a suggestion (a topic or a tentative quote), the search step is performed, and the first returned results (quotes) are fed into an LLM prompt which asks the generative model to invent a new text along the lines of the passed examples and the initial suggestion.

Setup

Install and import the necessary dependencies:

Don't mind the next cell too much, we need it to detect Colabs and let you upload the SCB file (see below):

Get DB connection

A couple of secrets are required to create a Session object (a connection to your Astra DB instance).

(Note: some steps will be slightly different on Google Colab and on local Jupyter, that's why the notebook will detect the runtime type.)

Creation of the DB connection

This is how you create a connection to Astra DB:

(Incidentally, you could also use any Cassandra cluster (as long as it provides Vector capabilities), just by changing the parameters to the following Cluster instantiation.)

Creation of the Vector table in CQL

You need a table which support vectors and is equipped with metadata. Call it "philosophers_cql".

Each row will store: a quote, its vector embedding, the quote author and a set of "tags". You also need a primary key to ensure uniqueness of rows.

The following is the full CQL command that creates the table (check out this page for more on the CQL syntax of this and the following statements):

Pass this statement to your database Session to execute it:

Add a vector index for ANN search

In order to run ANN (approximate-nearest-neighbor) searches on the vectors in the table, you need to create a specific index on the embedding_vector column.

When creating the index, you can optionally choose the "similarity function" used to compute vector distances: since for unit-length vectors (such as those from OpenAI) the "cosine difference" is the same as the "dot product", you'll use the latter which is computationally less expensive.

Run this CQL statement:

Add indexes for author and tag filtering

That is enough to run vector searches on the table ... but you want to be able to optionally specify an author and/or some tags to restrict the quote search. Create two other indexes to support this:

Connect to OpenAI

Set up your secret key

A test call for embeddings

Quickly check how one can get the embedding vectors for a list of input texts:

Note: the above is the syntax for OpenAI v1.0+. If using previous versions, the code to get the embeddings will look different.

Load quotes into the Vector Store

Get a dataset with the quotes. (We adapted and augmented the data from this Kaggle dataset, ready to use in this demo.)

A quick inspection:

Check the dataset size:

Insert quotes into vector store

You will compute the embeddings for the quotes and save them into the Vector Store, along with the text itself and the metadata planned for later use.

To optimize speed and reduce the calls, you'll perform batched calls to the embedding OpenAI service.

The DB write is accomplished with a CQL statement. But since you'll run this particular insertion several times (albeit with different values), it's best to prepare the statement and then just run it over and over.

(Note: for faster insertion, the Cassandra drivers would let you do concurrent inserts, which we don't do here for a more straightforward demo code.)

Use case 1: quote search engine

For the quote-search functionality, you need first to make the input quote into a vector, and then use it to query the store (besides handling the optional metadata into the search call, that is).

Encapsulate the search-engine functionality into a function for ease of re-use:

Putting search to test

Passing just a quote:

Search restricted to an author:

Search constrained to a tag (out of those saved earlier with the quotes):

Cutting out irrelevant results

The vector similarity search generally returns the vectors that are closest to the query, even if that means results that might be somewhat irrelevant if there's nothing better.

To keep this issue under control, you can get the actual "similarity" between the query and each result, and then set a cutoff on it, effectively discarding results that are beyond that threshold.

Tuning this threshold correctly is not an easy problem: here, we'll just show you the way.

To get a feeling on how this works, try the following query and play with the choice of quote and threshold to compare the results:

Note (for the mathematically inclined): this value is a rescaling between zero and one of the cosine difference between the vectors, i.e. of the scalar product divided by the product of the norms of the two vectors. In other words, this is 0 for opposite-facing vecors and +1 for parallel vectors. For other measures of similarity, check the documentation -- and keep in mind that the metric in the SELECT query should match the one used when creating the index earlier for meaningful, ordered results.

Use case 2: quote generator

For this task you need another component from OpenAI, namely an LLM to generate the quote for us (based on input obtained by querying the Vector Store).

You also need a template for the prompt that will be filled for the generate-quote LLM completion task.

Like for search, this functionality is best wrapped into a handy function (which internally uses search):

Note: similar to the case of the embedding computation, the code for the Chat Completion API would be slightly different for OpenAI prior to v1.0.

Putting quote generation to test

Just passing a text (a "quote", but one can actually just suggest a topic since its vector embedding will still end up at the right place in the vector space):

Use inspiration from just a single philosopher:

(Optional) Partitioning

There's an interesting topic to examine before completing this quickstart. While, generally, tags and quotes can be in any relationship (e.g. a quote having multiple tags), authors are effectively an exact grouping (they define a "disjoint partitioning" on the set of quotes): each quote has exactly one author (for us, at least).

Now, suppose you know in advance your application will usually (or always) run queries on a single author. Then you can take full advantage of the underlying database structure: if you group quotes in partitions (one per author), vector queries on just an author will use less resources and return much faster.

We'll not dive into the details here, which have to do with the Cassandra storage internals: the important message is that if your queries are run within a group, consider partitioning accordingly to boost performance.

You'll now see this choice in action.

The partitioning per author calls for a new table schema: create a new table called "philosophers_cql_partitioned", along with the necessary indexes:

Now repeat the compute-embeddings-and-insert step on the new table.

You could use the very same insertion code as you did earlier, because the differences are hidden "behind the scenes": the database will store the inserted rows differently according to the partitioning scheme of this new table.

However, by way of demonstration, you will take advantage of a handy facility offered by the Cassandra drivers to easily run several queries (in this case, INSERTs) concurrently. This is something that Cassandra / Astra DB through CQL supports very well and can lead to a significant speedup, with very little changes in the client code.

(Note: one could additionally have cached the embeddings computed previously to save a few API tokens -- here, however, we wanted to keep the code easier to inspect.)

Despite the different table schema, the DB query behind the similarity search is essentially the same:

That's it: the new table still supports the "generic" similarity searches all right ...

... but it's when an author is specified that you would notice a huge performance advantage:

Well, you would notice a performance gain, if you had a realistic-size dataset. In this demo, with a few tens of entries, there's no noticeable difference -- but you get the idea.

Conclusion

Congratulations! You have learned how to use OpenAI for vector embeddings and Astra DB / Cassandra for storage in order to build a sophisticated philosophical search engine and quote generator.

This example used the Cassandra drivers and runs CQL (Cassandra Query Language) statements directly to interface with the Vector Store - but this is not the only choice. Check the README for other options and integration with popular frameworks.

To find out more on how Astra DB's Vector Search capabilities can be a key ingredient in your ML/GenAI applications, visit Astra DB's web page on the topic.

Cleanup

If you want to remove all resources used for this demo, run this cell (warning: this will delete the tables and the data inserted in them!):